Subscribe to receive the latest blog posts to your inbox every week.

By subscribing you agree to with our Privacy Policy.

Manual review is necessary in BFSI onboarding.

But too often, it becomes the default.

A case completes KYC, passes core checks, and still lands in a review queue. Another case has only minor uncertainty, but gets treated with the same friction as a genuinely higher-risk case. Over time, this overloads operations teams, slows onboarding, and makes good cases harder to move quickly.

The problem is usually not that manual review exists.

The problem is that too many cases are being sent there without a clear framework.

That is why banks, NBFCs, and insurers need a stronger view of what should actually trigger manual review in BFSI onboarding.

Manual review in BFSI onboarding should be triggered only when the workflow cannot assign a reliable next action through verification, routing, and decisioning logic alone. In practice, review should be reserved for exception cases with meaningful ambiguity, material inconsistency, elevated risk signals, or control-sensitive outcomes.

That distinction matters because not every unclear case needs human intervention.

Some cases are genuinely risky. Some are incomplete but recoverable. Some are low-friction and should move forward. If all three are sent into the same queue, review stops being a control layer and becomes a bottleneck.

Most onboarding teams already understand that manual review creates delay.

That part is not new.

What matters now is whether review is being used precisely.

If review queues are filled with low-friction, low-value, or poorly segmented cases, three things happen:

So the real question is not whether manual review should exist.

It should.

The real question is: what should actually go to review?

This becomes even more important once onboarding teams move beyond basic verification and start working toward verification intelligence in onboarding.

This is where many workflows break down.

In weak onboarding systems, review gets triggered by:

That is not selective review.

That is review-by-default design.

And once review becomes the fallback for everything the system cannot interpret well, it stops functioning as a precise exception layer.

This is also why manual review dependency in BFSI onboarding remains such a persistent operational issue.

Manual review should exist to handle cases where human judgment adds real value.

That usually means one or more of the following:

In simple terms, manual review should be triggered when the case is not only unclear, but decision-sensitive.

That is a better standard than just “something looks off.”

A stronger review framework also requires clarity on what should not automatically move into manual review.

These cases often do not need full review on their own:

1. Minor signal weakness without broader contradiction

One weak supporting signal should not outweigh an otherwise strong case unless it materially changes the decision context.

2. Incomplete but recoverable inputs

If a case needs clarification or an additional input, that may require a re-verification or follow-up path, not full manual review.

3. Low confidence without meaningful risk

Low confidence can indicate that the system needs more clarity. It does not always mean the case is unsafe.

This is exactly why the distinction between confidence score in BFSI onboarding and actual risk matters.

4. Cases that are valid but not fully prioritised

Some delays happen not because the case needs review, but because the workflow cannot segment and route it properly.

This is why manual review must be designed as an exception path, not a catch-all queue.

A useful framework is to group review triggers into four categories.

1. Material inconsistency

Manual review should be triggered when the case contains contradictions that materially affect trust in the profile.

Examples:

This is where human review is useful because the issue is not missing data alone. It is whether the available data can still support a reliable decision.

2. Elevated risk sensitivity

Some cases may require review because the risk implications are meaningful enough that human oversight is justified.

Examples:

This is not the same as every risky-looking case. The issue is whether the risk signal is strong enough to justify manual intervention rather than automated routing.

This also connects closely to the difference between verification, risk scoring, and decisioning in BFSI.

3. Decision ambiguity with control impact

Some cases are not clearly risky, but the workflow still cannot assign the right next action reliably.

That is where review may be appropriate.

Examples:

This is where review is valuable as a decision-quality layer, not just a verification layer.

This is also the core issue behind what happens after verification in BFSI onboarding.

4. Policy-sensitive exceptions

Some cases require human review because the institution’s policy framework is not meant to be handled through generic routing logic alone.

Examples:

This is one of the clearest places where manual review should remain deliberate and controlled.

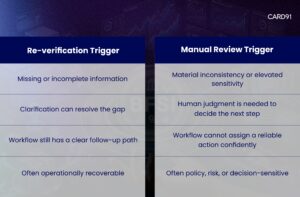

This distinction is critical.

Not every unclear case should go to review. Some should go to re-verification instead.

This matters because many teams overload review queues with cases that really belong in a cleaner re-verification path.

That is also one of the operational gaps discussed in designing a risk-aligned onboarding flow.

When review triggers are too broad, the workflow becomes less useful.

That usually creates five problems:

1. Good cases get delayed

Low-friction applicants are slowed because the system lacks precision.

2. Review queues lose priority quality

Ops teams spend time on cases that should have been handled upstream.

3. True exception cases compete for attention

High-value review cases lose the benefit of focused reviewer attention.

4. Decision consistency weakens

If too many cases depend on human escalation, outcomes become more variable across teams and queues.

5. Scale becomes harder

Growth in onboarding volume creates operational drag instead of improving efficiency.

This is why the goal is not to eliminate manual review.

It is to protect it by using it properly.

Banks and NBFCs with stronger onboarding workflows usually improve five things.

1. They define review triggers clearly

They do not rely on vague escalation language like “send if uncertain.”

2. They separate uncertainty from true exception handling

Not every unclear case needs human review.

3. They distinguish re-verification from review

Missing clarity and decision-sensitive ambiguity are not the same thing.

4. They build routing logic around actionability

The workflow is designed to determine what should happen next, not just what checks were completed.

5. They protect review capacity

Manual review is treated as a limited-value resource for exception cases, not as the system’s default safety net.

This is also why verification, risk scoring, and decisioning cannot be treated as the same layer.

Verification confirms inputs.

Risk scoring helps evaluate exposure.

Decisioning determines what should happen next.

Manual review sits at the edge of that process.

It should be triggered when automated logic cannot reach a sufficiently reliable next action on its own.

That is why better review design depends on better decisioning, not just more checks.

A useful companion read here is why fragmented verification slows BFSI decision-making.

Confidence scoring is useful here because it helps institutions distinguish between:

That distinction matters because many cases enter manual review not because they are truly risky, but because the workflow lacks enough clarity to act.

A stronger framework uses confidence to improve routing before escalation happens.

That helps keep manual review focused on cases where it adds the most value.

Consider two onboarding cases.

Case A

The applicant completes KYC, identity details align, document quality is acceptable, and most supporting signals are consistent. One field is incomplete, but the case is otherwise strong.

Case B

The applicant completes KYC, but multiple supporting signals conflict, the profile contains a material inconsistency, and the workflow cannot determine whether the case should move forward or be restricted.

If both cases enter manual review, the workflow is not distinguishing properly.

Case A may need clarification or re-verification.

Case B is the stronger candidate for manual review.

That is the difference a better decision framework should capture.

CARD91’s onboarding and verification-intelligence direction is aligned with this problem.

Across content on verification intelligence, manual reviews, confidence score, post-verification decisioning, and risk-aligned onboarding, the operating principle is clear: onboarding quality depends on more than completed checks. It depends on whether the workflow can route cases clearly before they become operational bottlenecks.

That matters because manual review works best when the system does more upstream, not less.

For product context, this is where VerifyIQ fits naturally.

As digital onboarding scales across lending, cards, insurance, and account opening, review-heavy workflows become more expensive to sustain.

If too many cases go to review:

That is why the next phase of onboarding improvement is not only about faster forms or more checks.

It is about defining the right review triggers.

Manual review is not the problem.

Poor review design is.

The goal is not to remove review from onboarding.

The goal is to make sure only the right cases reach it: Book a VerifyIQ demo

Q: What should trigger manual review in BFSI onboarding?

A: Manual review should be triggered when a case has material inconsistency, elevated risk sensitivity, meaningful decision ambiguity, or a policy-sensitive exception that requires human judgment.

Q: Should incomplete information always trigger manual review?

A: No. Incomplete information often belongs in a re-verification or clarification path, not in full manual review.

Q: What is the difference between re-verification and manual review?

A: Re-verification is used when missing or incomplete information can still be resolved operationally. Manual review is used when the case requires human judgment to determine the correct next action.

Q: Why do review queues become bottlenecks in digital onboarding?

A: They become bottlenecks when too many low-friction or poorly segmented cases are escalated into review by default.

Q: How can banks and NBFCs reduce unnecessary manual reviews?

A: They can reduce unnecessary reviews by defining review triggers clearly, improving routing logic, separating re-verification from review, and using stronger decision frameworks.

To know more about our offerings connect with our experts

Sales: sales@card91.io

HR: careers@card91.io

Media: comms@card91.io

Support: support@card91.io